Writing

Notes on research, methods, and ideas.

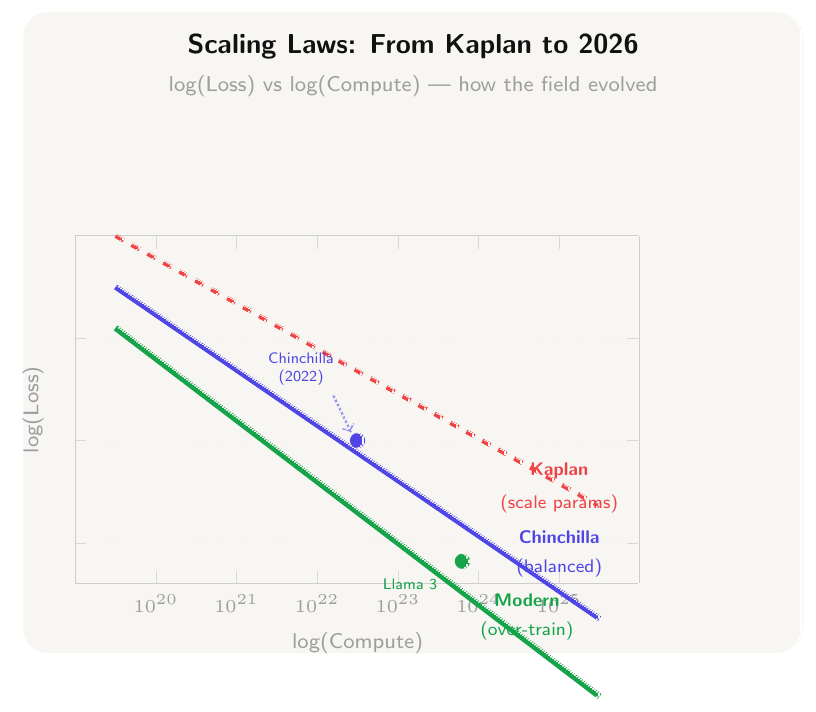

Scaling Laws for LLMs: From Chinchilla to 2026

The most expensive equations in AI determine how labs spend billions. Here's what they actually say — and where they're being rewritten. From Kaplan to Chinchilla to inference-time scaling.

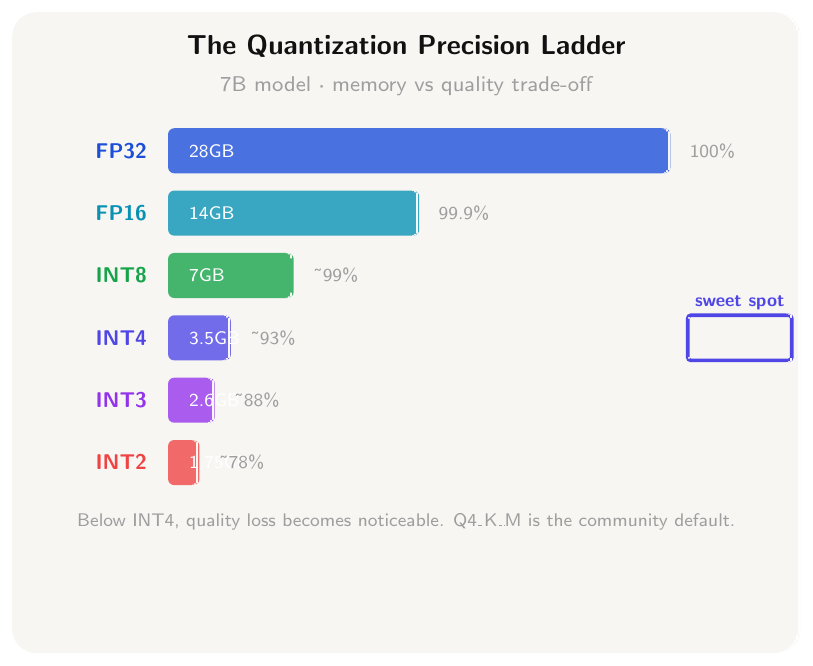

LLM Quantization Demystified: GGUF vs GPTQ vs AWQ

Your 7B model has 7 billion numbers, about 14 GB of weights at 16-bit precision. Here's exactly how to shrink them, and what you lose in the process. A practitioner's guide to choosing GGUF, GPTQ, or AWQ.

Mixture of Experts Explained: The Architecture Behind Every Frontier Model in 2026

How DeepSeek-R1, GPT-5, Gemini, and Mistral Large 3 all use the same trick — and what it means for your work. A complete conceptual and technical guide to MoE architecture.

Why Causal Inference Matters More Than Prediction in Development Research

Most ML models in global development optimize for prediction accuracy. I argue this is the wrong objective and lay out what we should be doing instead.

Neural Architecture Search for the Real World: Lessons from Edge Deployment

Building ML models that work on low-power edge hardware in climate-constrained settings taught me more about model design than any benchmark ever did.