LLM Quantization Demystified: GGUF vs GPTQ vs AWQ

April 28, 2026

Your 7B model has 7 billion numbers. Here’s exactly how to shrink them, and what you lose in the process.

The fundamental challenge in running LLMs locally is that many consumer GPUs have 8 GB of memory or less, while a 7B-parameter model stored in FP16 needs approximately 14 GB. Quantization addresses this by shrinking the model’s memory footprint at the expense of some precision. The same model quantized to INT4 drops to about 4 GB, small enough to run on a consumer GPU.

However, quantization is not one-size-fits-all. There is a crowded ecosystem of formats and algorithms (GGUF, GPTQ, AWQ), quantization types (Q4_K_M, IQ3_S, Q8_0), and tools (llama.cpp, vLLM, LM Studio, Ollama), each with different trade-offs between accuracy, speed, and memory usage. This guide clarifies what actually happens to model weights during quantization, which method to use for your hardware and use case, and how much accuracy each choice costs.

First Principles: What Happens to the Numbers

The Precision Ladder

Every parameter in a neural network is a floating-point value stored in binary. The precision of that number determines how much memory it consumes and how accurately it represents the learned weight:

| Precision | Bits per Weight | 7B Model Size | Relative Quality |
|---|---|---|---|
| FP32 | 32 | ~28 GB | 100% (baseline) |
| FP16 | 16 | ~14 GB | ~99.9% |
| INT8 | 8 | ~7 GB | ~99% |
| INT4 | 4 | ~3.5 GB | ~92–95% |
| INT3 | 3 | ~2.6 GB | ~85–90% |
| INT2 | 2 | ~1.75 GB | ~75–80% |

FP16/BF16 (16 bits) is the standard training and distribution precision; this is what you get when you download “the full model” from Hugging Face. Relative to that 16-bit baseline, INT8 delivers roughly 2x compression with minimal quality loss. INT4 is the sweet spot most practitioners target: 4x compression, and for most tasks the quality difference is barely noticeable. Below INT4 you start making real trade-offs.
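
The sizes in the table follow from simple arithmetic: parameters times bits per weight, divided by 8 bits per byte. The sketch below reproduces them; the function name `model_size_gb` is made up for this illustration, and it counts raw weights only, so real files come out slightly larger once per-block scales and metadata are included.

```python
def model_size_gb(n_params, bits_per_weight):
    """Raw weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

# Reproduce the "7B Model Size" column of the precision table.
for name, bits in [("FP32", 32), ("FP16", 16), ("INT8", 8),
                   ("INT4", 4), ("INT3", 3), ("INT2", 2)]:
    print(f"{name}: ~{model_size_gb(7e9, bits):.2f} GB")
```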

The Core Math

Quantization maps a range of floating-point values to a smaller set of integers:

Quantize: q = round((w − zero_point) / scale)

Dequantize: w ≈ q × scale + zero_point

Think of it as rounding. The number 3.14159 rounded to two decimal places is 3.14: you lose the last three digits, but the number is still close enough. Quantization is controlled rounding applied to billions of parameters.

Block quantization divides weights into small blocks (typically 32 to 128 weights) and computes a separate scale and zero_point for each block. This adapts to local weight distributions and significantly improves quality over global quantization.
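
A minimal pure-Python sketch of block quantization, using the quantize and dequantize formulas above. It assumes one common choice that the text does not spell out: `zero_point` is the block minimum and `scale` is the block range divided by the number of integer steps. The function names are illustrative and do not come from any library.

```python
import math

def quantize_block(block, bits=4):
    """Quantize one block: q = round((w - zero_point) / scale)."""
    qmax = (1 << bits) - 1                    # 15 for 4-bit
    lo, hi = min(block), max(block)
    scale = (hi - lo) / qmax or 1.0           # constant block: avoid dividing by zero
    zero_point = lo                           # assumption: offset is the block minimum
    q = [round((w - zero_point) / scale) for w in block]
    return q, scale, zero_point

def dequantize_block(q, scale, zero_point):
    """Reconstruct approximate weights: w ~ q * scale + zero_point."""
    return [qi * scale + zero_point for qi in q]

def quantize_blockwise(weights, block_size=32, bits=4):
    """Each block gets its own scale and zero_point."""
    return [quantize_block(weights[i:i + block_size], bits)
            for i in range(0, len(weights), block_size)]

def dequantize_blockwise(blocks):
    out = []
    for q, scale, zero_point in blocks:
        out.extend(dequantize_block(q, scale, zero_point))
    return out

# Toy weights: 64 small values, split into two blocks of 32.
weights = [0.1 * math.sin(i) for i in range(64)]
blocks = quantize_blockwise(weights, block_size=32)
restored = dequantize_blockwise(blocks)
worst = max(abs(a - b) for a, b in zip(weights, restored))
print(f"blocks: {len(blocks)}, worst-case error: {worst:.5f}")
```

The reconstruction error of any weight is at most half of its block's `scale`, which is why smaller blocks with tighter value ranges round more accurately.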

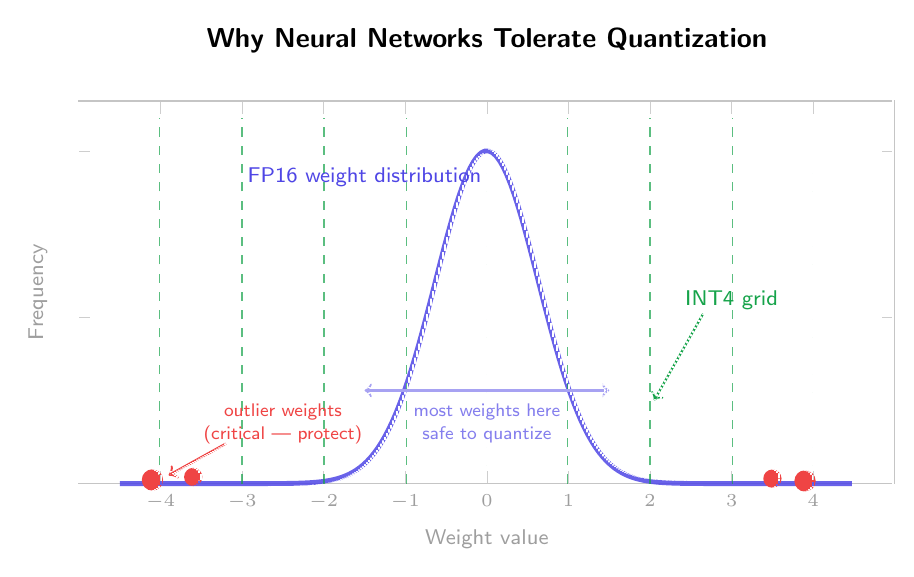

Why Neural Networks Tolerate Quantization

Neural network weights follow an approximate normal distribution — most weights lie close to zero, and there are only a few outliers with large values. Small weights contribute little to the layer’s computation, so quantization errors there have minimal impact. The problem lies in the outliers: a handful of very large weights are crucially important. Bad quantization of these outlier weights can cascade through the network. This insight separates good quantization methods from naive ones — AWQ, for example, identifies and explicitly protects these critical weights.
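
The effect of a single outlier can be seen in a toy experiment. The sketch below is an illustration with made-up weights, not a measurement on a real model: it quantizes 64 small values plus one large outlier to 4 bits, first with one global scale and then in blocks of 32, and compares the mean reconstruction error.

```python
import math

def roundtrip(weights, bits=4):
    """Quantize and immediately dequantize one group of weights."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / ((1 << bits) - 1) or 1.0
    return [round((w - lo) / scale) * scale + lo for w in weights]

def mean_abs_error(weights, block_size, bits=4):
    """Mean absolute reconstruction error when quantizing block by block."""
    total = 0.0
    for i in range(0, len(weights), block_size):
        block = weights[i:i + block_size]
        restored = roundtrip(block, bits)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(weights)

weights = [0.1 * math.sin(i) for i in range(64)]
weights[5] = 8.0  # one outlier stretches the range of whatever group contains it

global_err = mean_abs_error(weights, block_size=len(weights))  # one scale for everything
block_err = mean_abs_error(weights, block_size=32)             # outlier confined to one block
print(f"global: {global_err:.4f}  blockwise: {block_err:.4f}")
```

With a global scale, the outlier forces every small weight onto the same integer level. With blocks, only the block that contains the outlier pays that price.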

A Critical Clarification

GGUF is a file format, not a quantization method. It is a container that stores quantized weights along with metadata, model architecture, and the tokenizer — all in a single file. The actual quantization algorithms inside GGUF are K-quants, I-quants, and legacy quants.

GPTQ and AWQ are quantization algorithms — they describe how to reduce weight precision, not just where to store the result.

Takeaway: When someone says “GGUF Q4_K_M,” they mean a file in GGUF format that uses the K-quant algorithm at approximately 4-bit precision. When someone says “GPTQ 4-bit,” they mean a model quantized using the GPTQ algorithm at 4-bit precision, stored in GPTQ’s own format.

The Big Three: GGUF, GPTQ, and AWQ

GGUF — The Standard for Local Deployment

GGUF was developed by the llama.cpp project and is the successor to the earlier GGML format. It is a self-contained binary format combining quantized weights, architecture information, tokenizer data, and metadata into a single file. Download one file, point your inference engine at it, and run.

GGUF’s greatest strength is CPU + GPU hybrid inference. Unlike GPTQ and AWQ, which require GPU-only processing, GGUF models can split layers between GPU and CPU. Use your GPU to process 30 layers while running the remainder on your CPU — enabling you to run models that don’t fully fit in VRAM.

GGUF supports three generations of quantization algorithms:

Legacy Quants (Q4_0, Q5_0, Q8_0): Basic uniform quantization per block. Q4_0 and Q5_0 are kept mainly for backward compatibility and are not recommended for quality-sensitive use; Q8_0 remains useful as a near-lossless option.

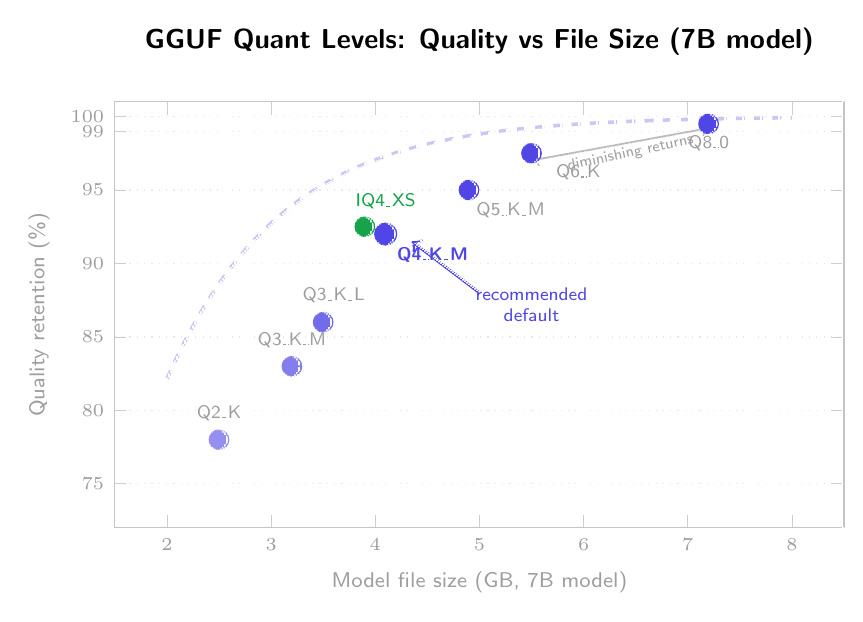

K-Quants (Q3_K_S through Q6_K): Mixed precision — critical layers (early attention, output projection) receive higher precision; less important layers get compressed more aggressively. The letter suffix denotes size: S(mall), M(edium), L(arge). Q4_K_M is the community sweet spot: approximately 92% quality retention at ~4.1 GB for a 7B model.

I-Quants (IQ1_S through IQ4_NL): Importance-based non-uniform quantization that allocates precision to weights according to their relative importance, using non-uniform grids. IQ4_XS offers slightly better quality than Q4_K_M at a similar or smaller file size.

Unsloth Dynamic 2.0 builds model-specific quantization strategies, analyzing each model individually to determine which layers need more precision. Unsloth reports that its 3-bit DeepSeek V3.1 GGUF outperforms many full-precision models on coding benchmarks.

Best for: Local deployment, CPU inference, Apple Silicon, hybrid CPU+GPU configurations, LM Studio, Ollama, llama.cpp.

GPTQ — The GPU Inference Powerhouse

GPTQ, introduced by Frantar et al. (2023) in “GPTQ: Accurate Post-Training Quantization for Generative Pre-trained Transformers,” uses second-order information (the Hessian matrix) to decide how each weight should be rounded. It quantizes the model layer by layer using a calibration dataset of ~128 examples. For each layer, the Hessian indicates which weights the layer’s output is most sensitive to. Weights are quantized one group at a time, and the rounding error each step introduces is compensated by adjusting the weights that have not been quantized yet.
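
To make the role of second-order information concrete, here is a two-weight toy version of Hessian-based error compensation. It is a sketch of the underlying idea only, not the GPTQ algorithm itself, which operates on full weight matrices and quantizes every weight. The calibration inputs, the weights, and the grid spacing `step` are all made-up values.

```python
def hessian_terms(X):
    """Entries of H = X^T X for a layer with two input features."""
    h11 = sum(r[0] * r[0] for r in X)
    h12 = sum(r[0] * r[1] for r in X)
    h22 = sum(r[1] * r[1] for r in X)
    return h11, h12, h22

def output_error(X, w, w_hat):
    """Squared error between the original and modified layer outputs."""
    total = 0.0
    for r in X:
        y = r[0] * w[0] + r[1] * w[1]
        y_hat = r[0] * w_hat[0] + r[1] * w_hat[1]
        total += (y - y_hat) ** 2
    return total

# Hypothetical calibration inputs (4 samples, 2 features) and layer weights.
X = [[1.0, 0.8], [0.5, 0.6], [-1.2, -1.0], [0.3, 0.1]]
w = [0.37, -0.52]
step = 0.25  # spacing of the quantization grid (assumed)

# Round the first weight to the grid and record the rounding error.
q0 = round(w[0] / step) * step
e = q0 - w[0]

# Plain rounding: the second weight is left untouched.
naive = [q0, w[1]]

# Second-order compensation: shift the not-yet-quantized weight so that the
# layer output changes as little as possible given the error e.
h11, h12, h22 = hessian_terms(X)
compensated = [q0, w[1] - h12 * e / h22]

naive_err = output_error(X, w, naive)
compensated_err = output_error(X, w, compensated)
print(f"naive: {naive_err:.4f}  compensated: {compensated_err:.4f}")
```

Because the two input features are correlated in the calibration data, nudging the second weight absorbs most of the damage done by rounding the first. This is why a method that uses calibration data can beat plain round-to-nearest at the same bit width.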

Quality-wise, GPTQ achieves approximately 90% quality retention at 4-bit — respectable, though slightly lower than AWQ. Speed-wise: using optimized Marlin kernels, GPTQ reaches ~712 tokens/second vs. 461 tokens/second for FP16. Without Marlin, GPTQ runs at ~276 tokens/second. The kernel matters greatly.

The tradeoff: GPTQ requires calibration data and the conversion process is time-consuming. And GPTQ is GPU-only — no CPU inference path.

Best for: High-speed GPU server environments, production API endpoints, vLLM and TGI deployments where throughput matters.

AWQ — The Quality Champion

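AWQ (Activation-aware Weight Quantization) by Lin et al. (2024) identifies which weights have the greatest impact on the model’s output, judged not by weight magnitude but by the magnitude of the activations that flow through them. Roughly 1% of weights are “salient.” Rather than storing them in a separate higher-precision format, AWQ scales the salient channels up before quantization (and scales the matching activations down) so that they lose less to rounding. All weights end up in the same low-bit format.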
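
The AWQ paper protects salient weights by scaling them before quantization and undoing the scale on the activation side. The sketch below shows that effect on one hand-picked group of four weights. It is a simplified illustration: the salient index and the scale `s = 2.0` are chosen by hand here, whereas AWQ derives per-channel scales from calibration activations.

```python
def fake_quant(values, bits=4):
    """Symmetric round-to-nearest: quantize, then dequantize, one group."""
    qmax = (1 << (bits - 1)) - 1              # 7 for 4-bit
    delta = max(abs(v) for v in values) / qmax
    return [round(v / delta) * delta for v in values]

weights = [0.9, -0.7, 0.33, 0.12]
salient = 2   # index of the weight treated as salient (assumed)
s = 2.0       # protection scale (assumed; AWQ searches for this value)

# Plain 4-bit rounding of the group.
plain = fake_quant(weights)
plain_err = abs(plain[salient] - weights[salient])

# Scale the salient weight up, quantize, then account for the activation
# being divided by s at inference time: the effective weight is Q(w * s) / s.
scaled = list(weights)
scaled[salient] *= s
effective = fake_quant(scaled)[salient] / s
scaled_err = abs(effective - weights[salient])

print(f"plain error: {plain_err:.4f}  scaled error: {scaled_err:.4f}")
```

The trick works as long as the scaled weight does not become the new maximum of its group: the quantization step stays the same, while the error on the salient weight shrinks by roughly a factor of `s`.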

W4A16: 4-bit weights, 16-bit activations. AWQ leaves activations unquantized, which helps preserve quality. At 4-bit precision, AWQ retains roughly 95% of original quality, the highest of the 4-bit methods compared here. With Marlin kernels: ~741 tokens/second. Without Marlin: ~67 tokens/second. Like GPTQ, AWQ requires calibration data and a CUDA GPU.

Best for: Maximum quality at 4-bit precision, production serving where quality is paramount, GPU-only deployments.

Head-to-Head Comparison

| Criterion | GGUF (Q4_K_M) | GPTQ (4-bit) | AWQ (4-bit) |
|---|---|---|---|
| Quality retention | ~92% | ~90% | ~95% |
| Speed (GPTQ/AWQ with Marlin) | 93 tok/s | 712 tok/s | 741 tok/s |
| CPU support | Yes | No | No |
| Calibration needed | No | Yes | Yes |
| Ease of use | Highest | Medium | Medium |
| Best served by | llama.cpp, LM Studio, Ollama | vLLM, TGI | vLLM, TGI |

Takeaway: GGUF wins on flexibility. AWQ wins on quality and speed (with proper kernels). GPTQ is the established middle ground for GPU serving. Running locally on consumer hardware: GGUF. Deploying a production API on dedicated GPUs: AWQ with Marlin.

Decoding the GGUF Alphabet

Naming Convention

- `Q[bits]_[variant]` (legacy): Q4_0, Q5_0, Q8_0
- `Q[bits]_K_[size]` (K-quants): Q4_K_M, Q5_K_S, Q6_K
- `IQ[bits]_[variant]` (importance-based): IQ4_XS, IQ3_S, IQ2_M
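
The naming rules can be turned into a small helper for sorting through a folder of downloads. This is a sketch based only on the three patterns listed here; `classify_quant` and `nominal_bits` are hypothetical names, and tags outside these patterns are reported as unknown.

```python
import re

def classify_quant(name):
    """Map a GGUF quant tag to its generation, using the naming patterns above."""
    tag = name.upper()
    if tag.startswith("IQ"):
        return "i-quant"
    if "_K" in tag:
        return "k-quant"
    if tag.startswith("Q"):
        return "legacy"
    return "unknown"

def nominal_bits(name):
    """Extract the nominal bit width from the tag, or None if absent."""
    match = re.match(r"I?Q(\d+)", name.upper())
    return int(match.group(1)) if match else None

for tag in ["Q4_0", "Q4_K_M", "Q6_K", "IQ4_XS", "Q8_0"]:
    print(f"{tag}: {classify_quant(tag)}, nominal {nominal_bits(tag)} bits")
```

The nominal bit width is only the number in the name; the average bits per weight is higher once block scales are counted.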

Reference Table (7B/8B models)

| Quant | Avg Bits | 7B Size | Quality | Recommendation |
|---|---|---|---|---|
| Q2_K | 2.6 | ~2.5 GB | Poor | Testing only |
| Q3_K_M | 3.9 | ~3.5 GB | Good− | Tight VRAM |
| Q4_K_M | 4.8 | ~4.1 GB | Good+ | RECOMMENDED DEFAULT |
| IQ4_XS | 4.3 | ~3.9 GB | Good+ | Better Q4 alternative |
| Q5_K_M | 5.7 | ~4.9 GB | Very Good+ | Quality priority |
| Q6_K | 6.6 | ~5.5 GB | Excellent | Near-lossless |
| Q8_0 | 8.5 | ~7.2 GB | Near-perfect | Maximum quality |

The Four Quants Most People Should Consider

Q4_K_M — “The Default.” Start here unless you have a compelling reason not to.

Q5_K_M — “When You Have Space.” Approximately 20% larger than Q4_K_M with a measurable quality improvement, especially on complex reasoning and fact retrieval.

IQ4_XS — “The New Default?” Same or smaller file size than Q4_K_M with better quality preservation due to non-uniform quantization grids. Use it if your inference engine supports it.

Q8_0 — “Nearly Lossless.” When quality is absolute and VRAM allows. Perplexity differences from FP16 are typically under 0.5%.

The KV Cache Trap

VRAM required = Model Size + KV Cache + Overhead

| Context Length | KV Cache |
|---|---|
| 4,096 tokens | ~500 MB – 1 GB |
| 8,192 tokens | ~1 – 2 GB |
| 32,768 tokens | ~4 – 8 GB |

On an 8 GB GPU, a 4.1 GB Q4_K_M model leaves ~3.9 GB for KV cache and runtime overhead: enough for 4k context, tight for 8k, and impossible for 32k without CPU offloading.
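
The KV cache figures can be estimated from the model's attention configuration: one key and one value vector per layer, per KV head, per token. The sketch below assumes a configuration typical of an 8B-class model with grouped-query attention (32 layers, 8 KV heads, head dimension 128) and a 16-bit cache. These numbers are illustrative assumptions; read the real values from your model's config.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, n_tokens, bytes_per_value=2):
    """Size of the KV cache; the leading 2 covers keys plus values."""
    return 2 * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_value

def vram_needed_gb(model_gb, kv_bytes, overhead_gb=0.5):
    """VRAM required = model size + KV cache + overhead (overhead is a guess)."""
    return model_gb + kv_bytes / 1e9 + overhead_gb

# Assumed attention configuration (not taken from any specific model file).
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

for context in (4096, 8192, 32768):
    kv = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, context)
    total = vram_needed_gb(4.1, kv)   # 4.1 GB: the Q4_K_M size from the table
    print(f"{context:>6} tokens: KV cache {kv / 2**30:.1f} GiB, total ~{total:.1f} GB")
```

Models without grouped-query attention have as many KV heads as attention heads, which multiplies the cache size accordingly and accounts for the upper end of each range in the table.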

Which Quantization Do You Need?

API serving (high throughput, multiple users): AWQ + Marlin kernel — highest throughput and quality. GPTQ + Marlin is a reasonable alternative.

Interactive/local, 8 GB VRAM: GGUF Q4_K_M for 7B models (prefer IQ4_XS if supported).

12 GB VRAM: GGUF Q5_K_M for 7B, Q4_K_M for 13B.

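16 GB VRAM: Q5_K_M for 13B. MoE models such as Mixtral 8x7B are larger than they look: all experts must be loaded, so a Q4_K_M file is well over 16 GB and needs partial CPU offloading.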

24 GB VRAM: Q6_K or Q8_0 for 7–13B, Q4_K_M for models around 30B.

Apple Silicon or CPU only: Of the three, GGUF is the only viable option, since GPTQ and AWQ need a CUDA GPU. llama.cpp is heavily optimized for Apple’s unified memory architecture.

Code generation: Be conservative — Q5_K_M or higher. Code is more sensitive to quantization than natural language.

Fact Q&A: Prefer Q5_K_M or higher. Fact recall drops faster than fluency.

Chat/Conversation, Summarization, Creative Writing: Q4_K_M is acceptable.

For those who don’t care: Download the GGUF Q4_K_M version of your model and use LM Studio or Ollama. 90% of users will be fine.

Get Running in 5 Minutes

LM Studio (2 minutes)

Lowest friction. Download LM Studio, find your model in the built-in browser, select GGUF Q4_K_M, click Download. No command line, no configuration, no dependencies.

Ollama (3 minutes)

```shell
ollama run qwen2.5-coder:7b-instruct-q4_K_M
```

Ollama handles the download, configuration, and serving automatically.

llama.cpp (5 minutes, maximum control)

```shell
./llama-server -m model-Q4_K_M.gguf -c 4096 -ngl 35
```

The -ngl 35 flag moves 35 layers to GPU. -c 4096 sets context window size. For Python:

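```python
from llama_cpp import Llama

# Load the GGUF file; the arguments mirror the -c and -ngl flags above.
llm = Llama(
    model_path="./qwen2.5-coder-7b-instruct-Q4_K_M.gguf",
    n_ctx=4096,        # context window size
    n_gpu_layers=35,   # number of layers offloaded to the GPU
    verbose=False,
)

# Send one chat turn and print the model's reply.
response = llm.create_chat_completion(
    messages=[{"role": "user", "content": "Write a Python function to merge two sorted lists."}],
    max_tokens=512,
    temperature=0.7,
)

print(response["choices"][0]["message"]["content"])
```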

No API keys, no cloud costs, no data leaving your machine.
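
If `llama-server` from the earlier command is running, the same model can also be queried over HTTP using only the standard library. This sketch assumes the server is listening at `http://localhost:8080` and exposes an OpenAI-style `/v1/chat/completions` endpoint; adjust `base_url` if your setup differs. The helper names `build_chat_payload` and `ask` are made up for this example.

```python
import json
import urllib.request

def build_chat_payload(prompt, max_tokens=256, temperature=0.7):
    """Request body in the OpenAI-style chat completion shape."""
    return {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": temperature,
    }

def ask(prompt, base_url="http://localhost:8080"):
    """POST one chat turn to a locally running server and return the reply text."""
    data = json.dumps(build_chat_payload(prompt)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/v1/chat/completions",   # assumed endpoint path
        data=data,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the server up, `print(ask("Write a Python function to merge two sorted lists."))` prints the model's answer. Nothing is sent anywhere other than the local machine.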

Quick-Reference Cheat Sheet

DEFAULT: GGUF Q4_K_M via LM Studio or Ollama

QUALITY: AWQ 4-bit via vLLM (requires GPU)

SPEED: AWQ or GPTQ with Marlin kernel via vLLM

BUDGET: GGUF Q3_K_M or IQ3_S

NEAR-LOSSLESS: GGUF Q8_0

References

- Frantar et al. (2023). “GPTQ: Accurate Post-Training Quantization for Generative Pre-trained Transformers.” arXiv:2210.17323

- Lin et al. (2024). “AWQ: Activation-aware Weight Quantization for On-Device LLM Compression and Acceleration.” arXiv:2306.00978

- Dettmers et al. (2023). “QLoRA: Efficient Finetuning of Quantized Language Models.” arXiv:2305.14314

- llama.cpp project. github.com/ggerganov/llama.cpp

- Unsloth Dynamic 2.0 GGUFs. unsloth.ai