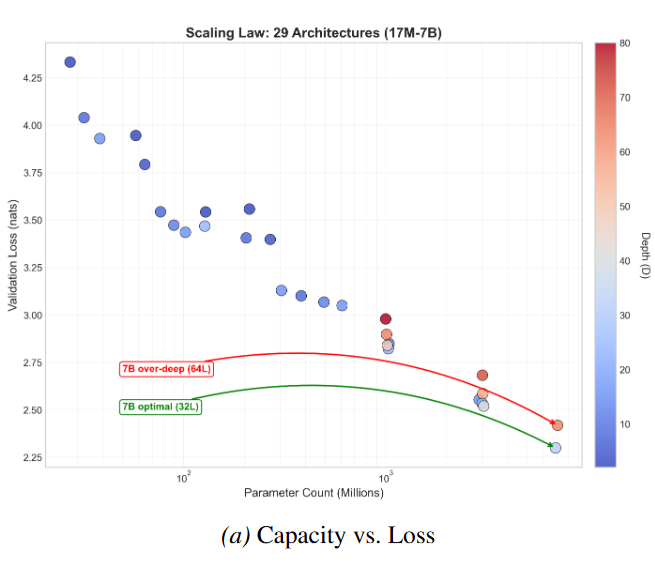

Width should grow 2.8× faster than depth in transformers, a result validated across 30 architectures up to 7B parameters. Past a critical depth, adding layers actively hurts, even though parameter count increases.

Abstract

Neural scaling laws describe how language model loss decreases with parameters and data, but they treat architecture as interchangeable. We propose architecture-conditioned scaling laws that decompose the dependence on depth and width, finding that optimal depth scales as D* ~ C^0.12 while optimal width scales as W* ~ C^0.34, meaning width should grow 2.8× faster than depth. We also identify a critical depth phenomenon, which we call the Depth Delusion: beyond D_crit ~ W^0.44 (sublinear in W), adding layers increases loss despite adding parameters. We validate these laws across 30 transformer architectures spanning 17M to 7B parameters (R² = 0.922). Our central finding is that at 7B scale a 64-layer model (6.38B params) underperforms a 32-layer model (6.86B params) by 0.12 nats despite being twice as deep, demonstrating that optimal depth-width tradeoffs persist at production scale.
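
To make the exponents concrete, the following is a minimal sketch of what the stated power laws imply in relative terms. It assumes C denotes the compute budget (the abstract does not define it), and because the proportionality constants are not given here, it computes only growth factors, not absolute depths or widths. The function names and the 10× and 2× scale values are illustrative choices, not part of the paper.

```python
# Exponents quoted in the abstract. The proportionality constants are not
# stated there, so only relative growth can be computed.
DEPTH_EXP = 0.12       # D* ~ C^0.12
WIDTH_EXP = 0.34       # W* ~ C^0.34
CRIT_DEPTH_EXP = 0.44  # D_crit ~ W^0.44


def growth_factor(scale: float, exponent: float) -> float:
    """Multiplicative change in y when x grows by `scale`, given y ~ x^exponent."""
    return scale ** exponent


def exponent_ratio() -> float:
    """Ratio of width to depth exponents (growth rates compared in log space)."""
    return WIDTH_EXP / DEPTH_EXP


if __name__ == "__main__":
    compute_scale = 10.0  # hypothetical: 10x more C (assumed to be compute)
    width_scale = 2.0     # hypothetical: doubling the width

    depth_growth = growth_factor(compute_scale, DEPTH_EXP)
    width_growth = growth_factor(compute_scale, WIDTH_EXP)
    crit_growth = growth_factor(width_scale, CRIT_DEPTH_EXP)

    print(f"exponent ratio (width/depth): {exponent_ratio():.2f}")
    print(f"{compute_scale:g}x C -> optimal depth x{depth_growth:.2f}")
    print(f"{compute_scale:g}x C -> optimal width x{width_growth:.2f}")
    print(f"{width_scale:g}x width -> critical depth x{crit_growth:.2f}")
```

Under these exponents, a 10× increase in C raises optimal depth by roughly 1.3× but optimal width by roughly 2.2×. The "2.8× faster" figure is the ratio of the two exponents, so it describes growth rates on a log scale rather than a ratio of the raw multipliers.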