Mixture of Experts Explained: The Architecture Behind Every Frontier Model in 2026

April 20, 2026

How DeepSeek-R1, GPT-5, Gemini, and Mistral Large 3 all use the same trick — and what it means for your work

One number to start with: by early 2026, more than 60% of the top-scoring open-source AI models on the Artificial Analysis leaderboard use a Mixture of Experts (MoE) architecture. Not dense transformers, not scaled-up versions of GPT-3: MoE.

The list of MoE-based models reads like a who’s who of frontier AI: DeepSeek-R1 (671 billion total parameters, 37 billion active per token), Mistral Large 3 (675 billion total, 41 billion active), Zhipu GLM-5 (744 billion total, approximately 44 billion active), Llama 4 from Meta, Gemini 3.1 Pro, and GPT-5, which many people believe also uses MoE. These models are not experimental curiosities; they are the production workhorses that millions of developers rely on every day.

However, most ML courses and tutorials still present the dense transformer as the default architecture. The gap between what the textbooks describe and what actually runs behind most API endpoints of the major LLM providers has never been larger.

This post aims to close that gap. By the end, you will have a complete mental model of MoE: what it is, how the router and the experts function inside a transformer, why it dominates the quality-efficiency frontier, and when you should (or should not) use it in your own work. If you are building with LLMs in 2026 and do not understand MoE, your mental model of AI is already out of date.

Dense vs. Sparse: Why Do We Want to Activate All Parameters?

A dense model can be thought of as a hospital where every doctor treats every patient. You enter the hospital with a broken arm, and the cardiologist, neurosurgeon, dermatologist, and oncologist all offer opinions before you receive a cast. Thorough, possibly; efficient, certainly not. The total medical expertise in the hospital is large, but nearly all of it is irrelevant to your specific visit.

Consider another hospital — with the same number of doctors, and the same total expertise, but with a triage nurse at the front door. The triage nurse evaluates you, determines that you require orthopedic care, and possibly radiologic evaluation, and directs you to those departments. The other specialists remain available for the next patient that requires their services.

The triage nurse is the router. The specialized departments are the experts. The hospital is the Mixture of Experts model.

In a standard dense transformer, every token — regardless of whether it is the word “the” or a complicated mathematical formula — is passed through all parameters in every layer. Therefore, a 70 billion parameter dense model applies all 70 billion parameters to every single token. There is no selection. There is no specialization. Only a uniform application of brute-force computation.

In contrast, in an MoE model, each token is directed to a subset of specialized sub-networks. The model may include 671 billion parameters in total, but only 37 billion parameters are activated for any given token. The remainder wait for tokens that require their services.

Dense model (e.g., Llama 3 70B): 70B total parameters, 70B active per token. Every parameter participates in every computation.

MoE model (e.g., DeepSeek-R1): 671B total parameters, 37B active per token. Approximately 5.5% of the parameters are used per token, and a learned router decides dynamically which subset that is.

The key economic principle underlying MoE is the following, and it is the single most important concept presented in this entire post:

Model quality tends to scale with total parameters: the quantity of knowledge represented in the weights. Model cost tends to scale with active parameters: what you actually compute per token. MoE decouples these two quantities, so you obtain the quality of a large model at roughly the computational cost of a much smaller one.
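The arithmetic behind this principle is easy to check. The sketch below uses the parameter counts quoted above and assumes the common rule of thumb that a forward pass costs roughly 2 FLOPs per active parameter per token. The function names are illustrative, and the result is a back-of-the-envelope estimate rather than a benchmark.

```python
def active_fraction(total_params_b: float, active_params_b: float) -> float:
    """Share of the model's parameters that participate in one token's forward pass."""
    return active_params_b / total_params_b


def forward_gflops_per_token(active_params_b: float) -> float:
    """Rough forward-pass cost, assuming ~2 FLOPs per active parameter per token."""
    return 2.0 * active_params_b  # billions of parameters -> GFLOPs


# (total, active) in billions of parameters, as quoted in this post.
models = {
    "Llama 3 70B (dense)": (70, 70),
    "DeepSeek-R1 (MoE)": (671, 37),
}

for name, (total, active) in models.items():
    print(
        f"{name}: {active_fraction(total, active):.1%} active, "
        f"~{forward_gflops_per_token(active):.0f} GFLOPs per token"
    )
```

Under these assumptions the MoE model stores almost ten times as many parameters as the dense one while needing about half the compute per token.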

The Three Pillars: Experts, Routers, and Load Balancing

Expert Networks

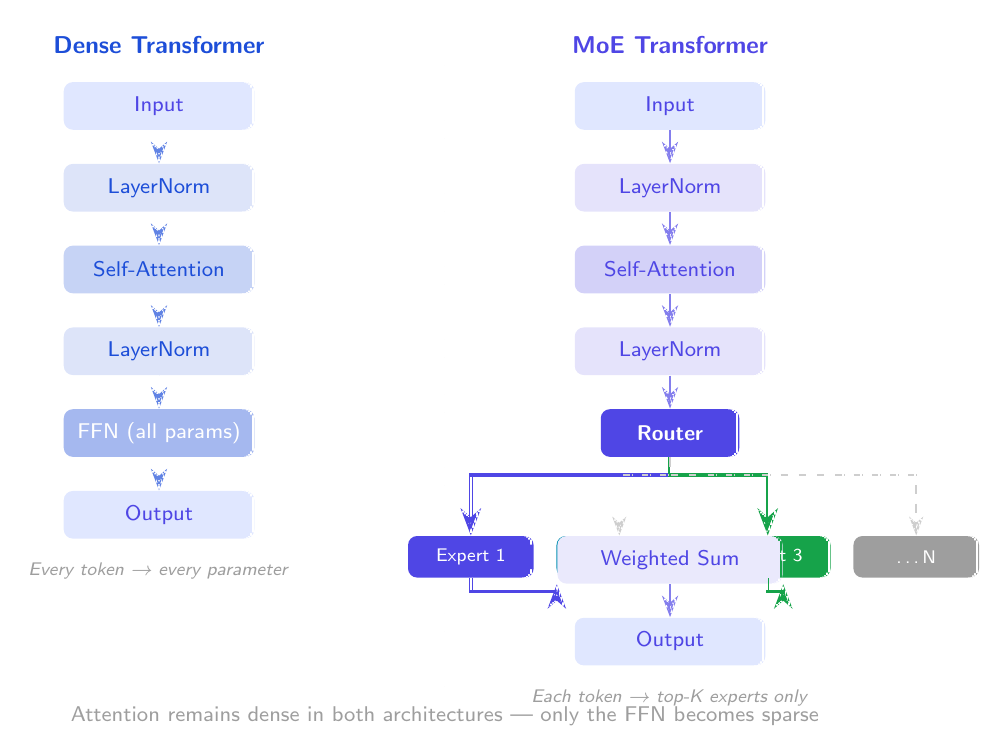

The most common misconception about MoE is that experts are entire language models — separate, independent systems stitched together. They are not. An expert is simply a standard feed-forward network (FFN), the same kind of FFN that exists in every transformer block. The only difference is replication: instead of one FFN per layer, you have N parallel FFNs. Each one is an expert.

Self-attention is unchanged: every token passes through the same attention weights, with no routing. In the standard MoE design, the modification touches only the feed-forward component.

Here is the structural comparison:

Standard Transformer Block:

Input → LayerNorm → Self-Attention → LayerNorm → FFN → Output

MoE Transformer Block:

Input → LayerNorm → Self-Attention → LayerNorm → Router → [Expert₁, Expert₂, ... Expert_N] → Weighted Sum → Output

Typical expert counts vary significantly across architectures:

| Model | Experts per MoE Layer | Active per Token |
|---|---|---|
| Mixtral 8x7B | 8 | 2 |
| Switch Transformer | 128 | 1 |
| DeepSeek-R1 | 128 (+ shared) | 6 (+ shared) |
| Zhipu GLM-5 | 256 | 8 |

Each expert has the same architecture — identical hidden dimensions, same activation function — but different learned weights. Specialization is not hard-coded. It emerges through training, as different experts receive different gradients and converge on different functions.

The Router / Gating Network

The router is the mechanism that decides which experts process which tokens, and it is surprisingly simple. In most implementations, the gating network is a single linear layer followed by a softmax — a small learned neural network with one job: produce a probability distribution over all N experts for each incoming token.

The routing decision comes down to Top-K selection. After the router produces probabilities for all experts, the model picks the K experts with the highest scores:

- Top-1: each token goes to exactly one expert (the Switch Transformer approach — fastest, but fragile)

- Top-2: each token goes to two experts, and their outputs are combined via a weighted sum (the Mixtral approach, and the most common choice)

- Top-K > 2: multiple experts per token (DeepSeek-R1 uses top-6 out of 128 — still only 4.7% of experts)

The math is clean. For a hidden state x, the gating function is:

g(x) = softmax(W_g · x)

output = Σ_{i ∈ TopK} g(x)_i · Expert_i(x)

Here is a simplified PyTorch-style implementation of the router:

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class Router(nn.Module):
        """Top-K gating network: one linear layer followed by a softmax."""

        def __init__(self, hidden_dim: int, num_experts: int, top_k: int = 2):
            super().__init__()
            # One logit per expert; no bias term.
            self.gate = nn.Linear(hidden_dim, num_experts, bias=False)
            self.top_k = top_k

        def forward(self, x: torch.Tensor) -> tuple[torch.Tensor, torch.Tensor]:
            logits = self.gate(x)              # (..., num_experts)
            probs = F.softmax(logits, dim=-1)  # distribution over experts
            # Keep the K most probable experts for each token.
            top_k_probs, top_k_indices = probs.topk(self.top_k, dim=-1)
            # Renormalize so the K selected weights sum to 1.
            top_k_probs = top_k_probs / top_k_probs.sum(dim=-1, keepdim=True)
            return top_k_indices, top_k_probs
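To show how the router's output is consumed, here is a minimal pure-Python sketch of the weighted sum from the gating formula, using toy experts that simply scale their input. Like the PyTorch router, it renormalizes the selected weights so they sum to 1. The helper names and toy values are illustrative assumptions, not part of any library.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(logits, k):
    """Return [(expert_index, weight), ...] for the k most probable experts."""
    probs = softmax(logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, gate_weights, experts, k=2):
    """Route x to k experts and return the weighted sum of their outputs."""
    # One router logit per expert: logits[i] = dot(gate_weights[i], x).
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    routes = route_top_k(logits, k)
    out = [0.0] * len(x)
    for idx, weight in routes:
        expert_out = experts[idx](x)  # only the selected experts are evaluated
        out = [o + weight * y for o, y in zip(out, expert_out)]
    return out, routes


# Toy setup: 4 "experts" that scale the input by 1, 2, 3, and 4.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
gate_weights = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0], [-1.0, 0.0]]

output, routes = moe_forward([1.0, 0.0], gate_weights, experts, k=2)
print(routes)  # experts 2 and 1 are selected, with weights that sum to 1
print(output)
```

Experts 0 and 3 are never called for this token, which is the whole point: the compute spent depends on K, not on the total number of experts.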

Load Balancing

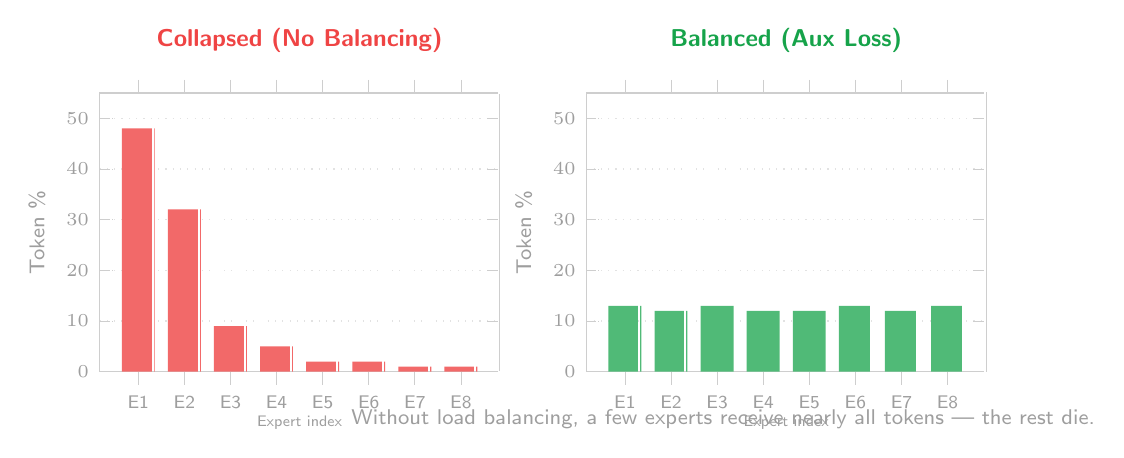

MoE training will collapse unless you actively prevent it. A few experts become “popular”: they get slightly better gradients at the beginning of training, which makes them slightly better, which causes the router to send more tokens to them, which gives them even more gradient signal. Meanwhile, the rest of the experts receive few tokens and barely train. Three techniques are commonly used to counter this.

Auxiliary load-balancing loss (Fedus et al., 2021):

L_balance = α · N · Σᵢ (fᵢ · pᵢ)

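where N is the number of experts, α is a small weighting coefficient, fᵢ is the fraction of tokens sent to expert i, and pᵢ is the average router probability for expert i. The loss is smallest when tokens are spread evenly across experts (fᵢ = pᵢ = 1/N), where it equals α, and it grows as routing concentrates on a few experts.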
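As a worked example of the formula, the sketch below computes L_balance for a balanced batch and a collapsed batch under top-1 routing. The function name, the toy batches, and the default α = 0.01 are assumptions chosen for illustration.

```python
def load_balance_loss(assignments, router_probs, num_experts, alpha=0.01):
    """alpha * N * sum_i(f_i * p_i) for top-1 routing over one batch."""
    num_tokens = len(assignments)
    # f_i: fraction of tokens dispatched to expert i.
    f = [assignments.count(i) / num_tokens for i in range(num_experts)]
    # p_i: router probability for expert i, averaged over tokens.
    p = [
        sum(probs[i] for probs in router_probs) / num_tokens
        for i in range(num_experts)
    ]
    return alpha * num_experts * sum(fi * pi for fi, pi in zip(f, p))


# Balanced batch: each of 4 experts gets one token, and the router is uniform.
balanced = load_balance_loss([0, 1, 2, 3], [[0.25] * 4] * 4, num_experts=4)

# Collapsed batch: every token goes to expert 0, which the router strongly favors.
collapsed = load_balance_loss(
    [0, 0, 0, 0], [[0.7, 0.1, 0.1, 0.1]] * 4, num_experts=4
)

print(f"balanced:  {balanced:.3f}")
print(f"collapsed: {collapsed:.3f}")
```

The balanced batch yields exactly α, while the collapsed batch is penalized almost three times as heavily, which is the gradient pressure that pushes the router back toward even usage.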

Expert capacity limits simply cap the number of tokens any single expert can process in a batch. Tokens that overflow are dropped from that expert’s computation and typically pass to the next layer through the residual connection.
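A minimal sketch of capacity-limited dispatch follows. It assumes the Switch Transformer style definition, where capacity is the even share of tokens per expert multiplied by a capacity factor. The post does not specify this formula, so treat the function, its parameter names, and the default factor of 1.25 as illustrative.

```python
import math


def dispatch_with_capacity(assignments, num_experts, capacity_factor=1.25):
    """Assign tokens to experts in arrival order, overflowing once an expert is full.

    assignments[t] is the expert chosen by the router for token t (top-1).
    Returns (buckets, overflow, capacity).
    """
    capacity = math.ceil(capacity_factor * len(assignments) / num_experts)
    buckets = [[] for _ in range(num_experts)]
    overflow = []  # tokens that skip the expert and ride the residual connection
    for token_id, expert in enumerate(assignments):
        if len(buckets[expert]) < capacity:
            buckets[expert].append(token_id)
        else:
            overflow.append(token_id)
    return buckets, overflow, capacity


# 8 tokens, 4 experts, capacity factor 1.0: each expert may take 2 tokens.
buckets, overflow, capacity = dispatch_with_capacity(
    [0, 0, 0, 1, 2, 0, 3, 1], num_experts=4, capacity_factor=1.0
)
print(capacity, buckets, overflow)
```

Expert 0 was chosen four times but accepts only two tokens, so tokens 2 and 5 overflow. A larger capacity factor drops fewer tokens at the price of more padding and memory.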

DeepSeek’s auxiliary-loss-free approach uses per-expert bias terms, adjusted during training, to steer routing without any penalty term in the training objective. This eliminates the notoriously finicky hyperparameter α.
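A rough sketch of the bias-based idea, under assumptions this post does not spell out: each expert carries a bias that is added to its router score only when choosing the top-K, and after each batch the bias is nudged down for overloaded experts and up for underloaded ones. The fixed step size and the function names are illustrative, not DeepSeek's actual code.

```python
def select_top_k(scores, biases, k):
    """Pick the k experts with the highest (score + bias)."""
    ranked = sorted(
        range(len(scores)), key=lambda i: scores[i] + biases[i], reverse=True
    )
    return ranked[:k]


def update_biases(biases, token_counts, step=0.001):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean_load = sum(token_counts) / len(token_counts)
    updated = []
    for bias, count in zip(biases, token_counts):
        if count > mean_load:
            updated.append(bias - step)
        elif count < mean_load:
            updated.append(bias + step)
        else:
            updated.append(bias)
    return updated


# Expert 0 is overloaded, experts 1 and 3 are underloaded, expert 2 is on target.
biases = update_biases([0.0, 0.0, 0.0, 0.0], token_counts=[10, 2, 4, 0], step=0.1)
print(biases)

# A bias can flip a close routing decision without touching the training loss.
print(select_top_k([0.5, 0.45, 0.05], [0.0, 0.0, 0.0], k=1))
print(select_top_k([0.5, 0.45, 0.05], [0.0, 0.1, 0.0], k=1))
```

Because the correction acts on the selection step rather than on the loss, there is no balancing gradient competing with the language-modeling objective.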

Designing Your MoE Model

Number of Experts

The field has coalesced around a few sweet spots: 8 (Mixtral 8x7B), 64, and 128 (DeepSeek-R1). DeepSeek-V2 introduced the concept of fine-grained experts — breaking what would typically be a standard-size FFN into numerous smaller experts. With 128 fine-grained experts and top-6 routing, there are over 5.4 billion combinations of 6 experts — a huge space for the model to find meaningful specializations.
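The combination count is straightforward to verify with the standard library. The sketch below compares the two configurations mentioned in this post; `routing_stats` is an illustrative helper name.

```python
import math


def routing_stats(num_experts: int, top_k: int) -> tuple[int, float]:
    """Number of distinct expert subsets and fraction of experts active per token."""
    return math.comb(num_experts, top_k), top_k / num_experts


for label, n, k in [("8 experts, top-2", 8, 2), ("128 experts, top-6", 128, 6)]:
    combos, fraction = routing_stats(n, k)
    print(f"{label}: {combos:,} combinations, {fraction:.1%} of experts active")
```

Going from 8 coarse experts to 128 fine-grained ones raises the number of possible expert subsets from 28 to about 5.4 billion while the share of experts active per token falls.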

Top-K Value

Top-1 is fastest but brittle. Top-2 is the most common choice in practice. Top-6 or greater (as in DeepSeek-R1) provides higher quality at increased compute cost. Even so, DeepSeek-R1 activates only 4.7% of its routed experts per token (6 of 128), far sparser than Mixtral at 25% (2 of 8).

Shared vs. Routed Experts

DeepSeek-V2 introduced shared experts — FFNs that are always active for every token — alongside selectively activated routed experts. The shared experts provide a permanent home for universal knowledge (syntax, common word relationships, general reasoning templates) rather than distributing it among whatever routed experts happen to see common tokens.
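The difference between the two kinds of expert can be sketched in a few lines. This toy version simply sums the shared experts' outputs with the weighted routed outputs. Real implementations differ in details such as residual connections and scaling, and every name here is illustrative.

```python
def moe_with_shared(x, shared_experts, routed_experts, routes):
    """Sum of always-on shared experts plus the weighted routed experts.

    routes is [(expert_index, weight), ...] as produced by a top-K router.
    """
    out = [0.0] * len(x)
    for expert in shared_experts:  # applied to every token, no routing
        out = [o + y for o, y in zip(out, expert(x))]
    for idx, weight in routes:  # only the selected routed experts run
        out = [o + weight * y for o, y in zip(out, routed_experts[idx](x))]
    return out


def scale(s):
    """Toy expert: multiply every element of the input by s."""
    return lambda x: [s * v for v in x]


shared = [scale(1.0)]
routed = [scale(10.0), scale(20.0), scale(30.0)]

# The router picked routed experts 2 and 0 with weights 0.75 and 0.25.
result = moe_with_shared([1.0, 2.0], shared, routed, routes=[(2, 0.75), (0, 0.25)])
print(result)
```

Routed expert 1 never runs for this token, while the shared expert contributes regardless of what the router decides.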

| Scenario | Experts | Top-K | Shared Experts | Layer Frequency |
|---|---|---|---|---|
| Quick prototype | 8 | 2 | No | Every other layer |
| Production quality | 64–128 | 4–6 | Yes | Every other layer |
| Edge deployment | 4–8 | 1–2 | No | Every 4th layer |
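To make the "Layer Frequency" column concrete, the sketch below lists which layers would carry an MoE feed-forward block for a given interval. It assumes the last layer of each group is the sparse one. Real models choose the placement differently, and the helper name is hypothetical.

```python
def moe_layer_indices(num_layers: int, every: int) -> list[int]:
    """Zero-based indices of layers that use an MoE FFN, one per `every` layers.

    Assumes the last layer of each group is the sparse one; other layers keep
    a regular dense FFN.
    """
    return [i for i in range(num_layers) if (i + 1) % every == 0]


print(moe_layer_indices(8, every=2))  # "every other layer"
print(moe_layer_indices(8, every=4))  # "every 4th layer"
```

Sparser placement means fewer expert weights to store, which is why the edge-deployment row uses the larger interval.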

Case Study: DeepSeek-R1

Specifications:

- 671 billion total parameters, 37 billion active per token

- 128 routed experts per MoE layer plus shared experts

- Top-6 Routing — 6 out of 128 experts per token

- Fine-grained expert segmentation

- Auxiliary-loss-free load balancing

- MIT License — fully open weights and documentation

DeepSeek-R1 is very close to GPT-4o on mathematics, coding, and general reasoning tasks, while its inference compute is roughly that of a 40 billion parameter dense model. One honest limitation: all 671 billion parameters must be loaded into memory, even though only 37 billion are active per token. At FP16 precision (2 bytes per parameter), the weights alone require roughly 1.3 terabytes of VRAM.
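The memory figure follows directly from the parameter count. The sketch below computes the weight footprint at a few precisions. It counts weights only, ignoring the KV cache, activations, and runtime overhead, and the helper name is illustrative.

```python
def weight_memory_gb(total_params_b: float, bits_per_param: float) -> float:
    """Memory for the weights alone, in decimal GB (1 GB = 1e9 bytes)."""
    return total_params_b * bits_per_param / 8


for label, bits in [("FP16", 16), ("FP8", 8), ("4-bit", 4)]:
    print(f"DeepSeek-R1 at {label}: ~{weight_memory_gb(671, bits):,.0f} GB")
```

Note that the total parameter count, not the active count, drives this number: sparsity saves compute per token, not storage.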

Deploying MoE on Consumer Hardware

| VRAM | What You Can Run |
|---|---|
| 8 GB | Mixtral 8x7B at Q3–Q4 (with CPU offload) |
| 16 GB | Mixtral 8x7B at Q4_K_M comfortably |
| 24 GB | Mixtral at Q5–Q6, many medium MoE models at Q4 |

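With 16 GB of VRAM you can run either a dense 13B model at Q4 or Mixtral 8x7B (~13B active) at Q3–Q4. Keep in mind that all of Mixtral’s roughly 47B parameters must be stored somewhere: at Q4 the weights take about 26 GB, so the rows above assume that whatever does not fit in VRAM is offloaded to system RAM, which costs speed. When that trade-off is acceptable, MoE usually wins because there’s far more knowledge stored in the weights at the same active compute cost.

Practical Recommendation: When a dense model and an MoE model both fit within your memory budget, try the MoE version first. You get more knowledge per unit of compute.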
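A quick way to sanity-check a configuration is to estimate how much of the quantized model fits on the GPU. The sketch below assumes roughly 46.7B total parameters for Mixtral 8x7B, about 4.5 effective bits per weight for a Q4-class quantization, and 2 GB of VRAM held back for the KV cache and activations. All three figures are approximations, and the helper name is hypothetical.

```python
def offload_split(params_b, bits_per_weight, vram_gb, reserve_gb=2.0):
    """Split quantized weights between GPU memory and system RAM.

    Returns (weights_gb, on_gpu_gb, offloaded_gb). reserve_gb is VRAM held back
    for the KV cache and activations (an assumed figure).
    """
    weights_gb = params_b * bits_per_weight / 8
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    on_gpu_gb = min(weights_gb, usable_gb)
    return weights_gb, on_gpu_gb, weights_gb - on_gpu_gb


for name, params_b in [("Dense 13B", 13.0), ("Mixtral 8x7B", 46.7)]:
    weights, on_gpu, offloaded = offload_split(params_b, 4.5, vram_gb=16)
    print(
        f"{name}: {weights:.1f} GB of weights, "
        f"{on_gpu:.1f} GB on GPU, {offloaded:.1f} GB offloaded"
    )
```

Under these assumptions the dense 13B model fits entirely on a 16 GB card, while a little under half of Mixtral's weights live in system RAM.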

What We Still Don’t Know

Why do experts specialize? No one told Expert #47 to handle code. It emerged from training. We don’t have a satisfactory theory for why specialization appears so clearly.

Can we apply neural architecture search (NAS) to MoE? The number of experts, expert size, Top-K value, and layer placement are all currently decided by human researchers through ablation studies. The MoE design space is massive and almost completely unexplored by automated architecture search.

Will attention become sparse too? Most MoE models today keep attention dense. A doubly sparse transformer, with both sparse attention and sparse FFN, is the natural next step, though combining the two routing mechanisms adds significant complexity.

Key Takeaway

MoE is not a hack. It is a well-founded architecture that separates model quality from model cost by activating only the parameters each token needs. As of 2026, MoE is the standard architecture for cutting-edge LLMs, and understanding its internals — experts, routers, load balancing, design trade-offs — is no longer optional for anyone working seriously with large language models.

Essential Reading

- Shazeer et al. (2017) — “Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer”

- Fedus, Zoph & Shazeer (2021) — “Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity”

- Jiang et al. (2024) — “Mixtral of Experts”

- DeepSeek-AI (2024) — “DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model”

- DeepSeek-AI (2025) — “DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning”

- Clark et al. (2022) — “Unified Scaling Laws for Routed Language Models”