Scaling Laws for LLMs: From Chinchilla to 2026

May 4, 2026

The most expensive equations in AI determine how labs spend billions. Here’s what they actually say — and where they’re being rewritten.

The Most Expensive Equation in AI

When a lab sets out to train a new frontier model, one critical decision is made before a single GPU is fired up: how to spend the available compute budget.

The lab has a fixed number of FLOPs to spend. What is the optimal model size? How many tokens should it train on? How long should training run?

If these questions are answered incorrectly, you risk wasting months of training time and tens of millions of dollars. A model too large for its token budget is undertrained: it underperforms a smaller model trained on more tokens with the same compute. A model too small for its compute budget leaves already-paid-for performance on the table.

If model size and training data are allocated well, you also gain something else: a prediction of model quality before training begins, and often before the architecture is finalized.

Scaling laws make this prediction possible. They are empirical relationships between compute (C), model size (N), training data (D), and model quality (loss, L), and they provide a quantitative basis for the most expensive decisions in AI.

However, few people realize that the understanding of scaling laws has dramatically changed since the Chinchilla paper (Hoffmann et al., 2022). The contemporary understanding includes over-training economics, architectural effects, inference costs, and inference-time compute — none of which were addressed by Chinchilla. If your conceptualization of scaling laws stopped at “20 tokens per parameter,” you are missing a significant part of the picture.

The Basic Ingredients: N, D, C, and L

What IS a Scaling Law?

A scaling law is a mathematical relationship describing how model performance changes as you vary key inputs:

- N = number of parameters (model size)

- D = number of training tokens (dataset size)

- C = total training compute in FLOPs (approximately C ≈ 6ND for dense transformers)
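The C ≈ 6ND approximation is easy to check by hand. A minimal sketch, assuming a dense transformer; the function name `training_flops` is an illustrative choice, and the result is an estimate rather than a measured cost:

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute for a dense transformer: C ~ 6 * N * D."""
    return 6.0 * n_params * n_tokens


# A 1B-parameter model trained on 20B tokens.
compute = training_flops(1e9, 20e9)
print(f"{compute:.1e} FLOPs")  # 1.2e+20 FLOPs
```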

The relationships are power laws:

L(N) ~ N^(−α) and L(D) ~ D^(−β)

Loss decreases as a power of N and D. There is no plateau in the range tested so far — just smooth, predictable diminishing returns. Plot loss against compute on a log-log scale, and you get a straight line. Reliably.
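The "straight line on a log-log plot" claim can be turned into a fitting procedure: take logs of both axes and fit a line, whose slope is the negative exponent. The sketch below uses synthetic, noise-free data (the prefactor and the helper name `fit_power_law_exponent` are assumptions for illustration); real training runs would add noise and usually an irreducible-loss term.

```python
import math


def fit_power_law_exponent(xs, ys):
    """Return alpha for y ~ x^(-alpha), via a least-squares line in log-log space."""
    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    mean_x = sum(log_x) / len(log_x)
    mean_y = sum(log_y) / len(log_y)
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(log_x, log_y))
    variance = sum((a - mean_x) ** 2 for a in log_x)
    return -covariance / variance  # slope of the line is -alpha


# Synthetic, noise-free losses generated from L(N) = 10 * N^(-0.076).
# The prefactor 10 is an arbitrary illustration value.
model_sizes = [10 ** k for k in range(6, 11)]  # 1M to 10B parameters
losses = [10.0 * n ** -0.076 for n in model_sizes]

alpha = fit_power_law_exponent(model_sizes, losses)
print(f"fitted alpha = {alpha:.3f}")  # fitted alpha = 0.076
```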

The Kaplan et al. (2020) Discovery

“Scaling Laws for Neural Language Models” by Kaplan et al. at OpenAI was the paper that started it all. Key findings from training hundreds of models across seven orders of magnitude of compute:

- L(N) ~ N^(−0.076): loss decreases as a power law with model size

- L(D) ~ D^(−0.095): loss decreases as a power law with data size

- L(C) ~ C^(−0.050): loss decreases as a power law with compute

The critical claim was about optimal allocation:

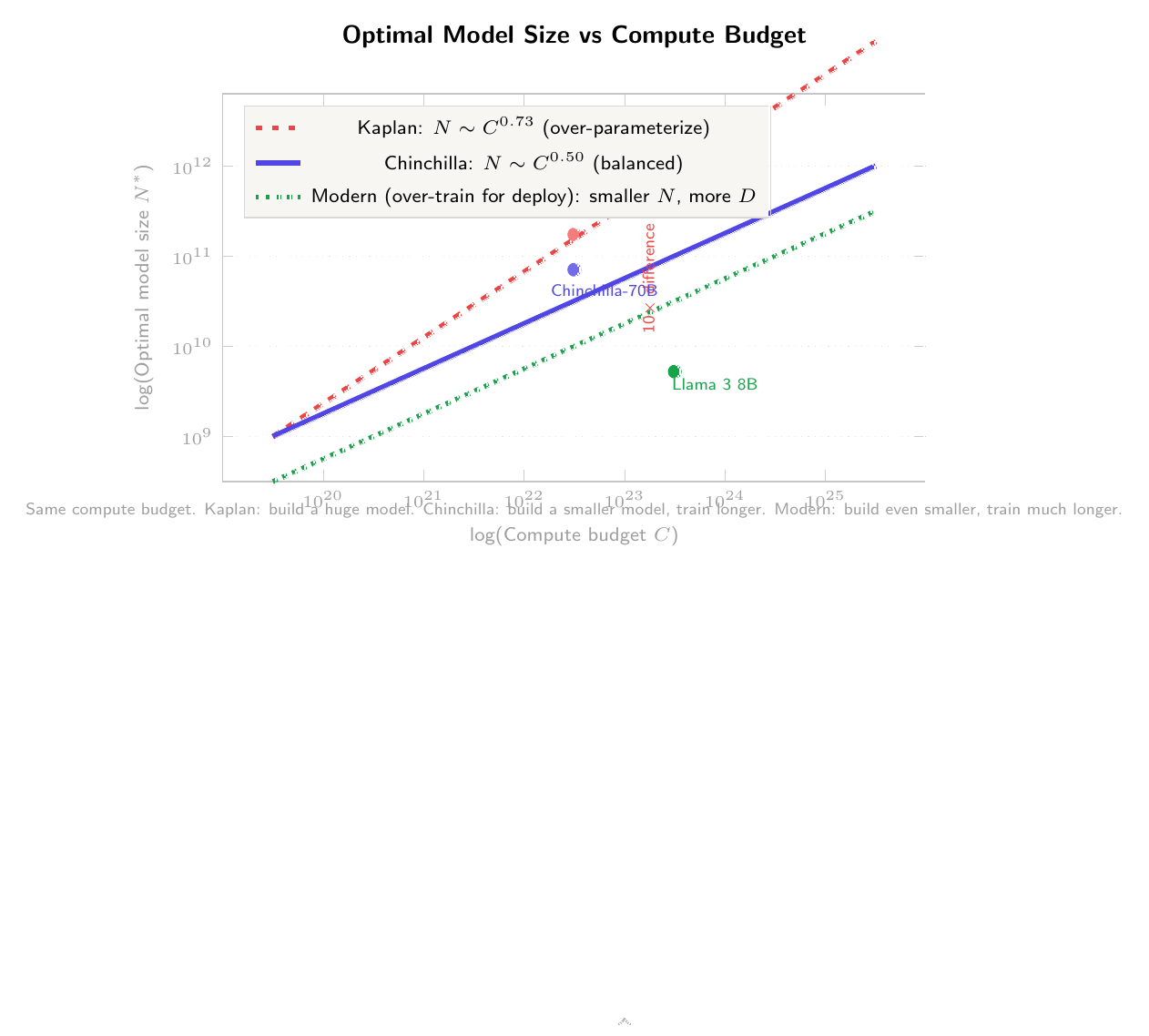

N_opt ~ C^0.73, D_opt ~ C^0.27

In plain English: if you get 10× more compute, make the model roughly 5× bigger and train on roughly 2× more data. This justified the “scale up parameters” paradigm that produced GPT-3 (175B parameters, trained on only 300B tokens).
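The "5× bigger, 2× more data" figures follow directly from the exponents. A small sketch of that arithmetic (the function name is hypothetical):

```python
KAPLAN_N_EXP = 0.73  # N_opt ~ C^0.73
KAPLAN_D_EXP = 0.27  # D_opt ~ C^0.27


def kaplan_scale_factors(compute_multiplier: float) -> tuple[float, float]:
    """Return (model-size multiplier, data multiplier) for a compute multiplier."""
    return compute_multiplier ** KAPLAN_N_EXP, compute_multiplier ** KAPLAN_D_EXP


n_mult, d_mult = kaplan_scale_factors(10.0)
print(f"model x{n_mult:.2f}, data x{d_mult:.2f}")  # model x5.37, data x1.86
```

Because the two exponents sum to 1, the two multipliers always multiply back to the compute multiplier, which is what C ≈ 6ND requires.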

Making It Tangible

With a budget of roughly 2 × 10^23 FLOPs (using C ≈ 6ND):

- Under a Kaplan-style, parameter-heavy allocation: train a ~100B parameter model on ~300B tokens

- Under Chinchilla (spoiler): train a ~40B parameter model on ~800B tokens

That is a 2.5× difference in model size for the same compute budget, which translates directly into different GPU memory, serving, and data requirements.

Chinchilla: The Paper That Changed Everything

Hoffmann et al. at DeepMind published “Training Compute-Optimal Large Language Models” in 2022. Their correction: Kaplan scaled model size far too aggressively and data far too conservatively.

Chinchilla’s results:

N_opt ~ C^0.50, D_opt ~ C^0.50

N and D should grow about equally with compute. The practical rule of thumb: for every parameter, train on around 20 tokens. A 7B model requires ~140B tokens. A 70B model requires ~1.4T tokens.
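Combining the 20-tokens-per-parameter rule with C ≈ 6ND gives a closed-form split of a compute budget. A minimal sketch under exactly those two assumptions (the function name is illustrative, and the rule of thumb is itself an approximation):

```python
import math

TOKENS_PER_PARAM = 20.0  # rule of thumb from the text


def chinchilla_allocation(compute_flops: float) -> tuple[float, float]:
    """Split a compute budget using C ~ 6*N*D and D = 20*N.

    Substituting D = 20*N gives C = 120*N^2, so N = sqrt(C / 120).
    """
    n_params = math.sqrt(compute_flops / (6.0 * TOKENS_PER_PARAM))
    n_tokens = TOKENS_PER_PARAM * n_params
    return n_params, n_tokens


n, d = chinchilla_allocation(5.88e23)
print(f"{n / 1e9:.0f}B params, {d / 1e12:.1f}T tokens")  # 70B params, 1.4T tokens
```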

The evidence was persuasive. Chinchilla (70B parameters, 1.4T tokens) outperformed Gopher (280B parameters, only 300B tokens), a model sized in line with the older Kaplan-style guidance, while using roughly the same training compute. The model with one-fourth the parameters won because the same budget was spent on more data rather than more parameters.
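The same comparison can be sketched with the parametric loss form L(N, D) = E + A/N^α + B/D^β. The constants below are the fitted values reported by Hoffmann et al. (2022) for their parametric approach; treat them as approximate, and treat the outputs as predictions of that fit, not measured losses.

```python
# Fitted constants as reported for the parametric fit in Hoffmann et al. (2022).
# Approximate; used here for illustration only.
E, A, B = 1.69, 406.4, 410.7
ALPHA, BETA = 0.34, 0.28


def predicted_loss(n_params: float, n_tokens: float) -> float:
    """Parametric loss: irreducible term + model-size term + data term."""
    return E + A / n_params ** ALPHA + B / n_tokens ** BETA


chinchilla = predicted_loss(70e9, 1.4e12)  # 70B params, 1.4T tokens
gopher = predicted_loss(280e9, 300e9)      # 280B params, 300B tokens
print(f"Chinchilla: {chinchilla:.3f}, Gopher: {gopher:.3f}")
```

Under this fit the Chinchilla configuration comes out with the lower predicted loss, because Gopher's data term dominates its smaller model-size term.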

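Why did Kaplan get it wrong? One cause identified by Hoffmann et al.: Kaplan et al. used a fixed number of training tokens and a single learning rate schedule for all models. Loss values read off mid-run, before the learning rate had decayed, overestimated what a properly scheduled shorter run would achieve, and this unintentionally favored larger models. When Chinchilla matched the cosine schedule length to each run's token count, the optimal allocation shifted significantly toward more data.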

The industry effect was immediate:

- It ended the “just scale up parameters” era

- It led directly to Meta’s Llama strategy: smaller, better-trained models

- It elevated data teams to the same importance as modeling teams

| | Kaplan (2020) | Chinchilla (2022) |
|---|---|---|
| N scaling | N ~ C^0.73 | N ~ C^0.50 |
| D scaling | D ~ C^0.27 | D ~ C^0.50 |
| ~2 × 10^23 FLOPs → N | ~100B params | ~40B params |
| ~2 × 10^23 FLOPs → D | ~300B tokens | ~800B tokens |
| Philosophy | Bigger models | More data |

Three Corrections to Chinchilla That Reshaped Modern Training

Chinchilla was right about a lot. But it optimized for a world where training cost is all that matters.

Correction 1: Over-Training for Deployment

Chinchilla answers: What is the optimal model for a fixed training compute budget? But you don’t train a model and discard it. You deploy it and serve possibly hundreds of millions of queries. For widely used models, lifetime inference cost can dominate training cost.

If you over-train a 7B model on far more tokens than the Chinchilla-optimal 140B, you get a model that is worse than a 70B Chinchilla-optimal model but roughly 10× cheaper per query, since per-token inference cost scales with parameter count. For high-volume deployments, the total cost (training plus lifetime inference) of the smaller, over-trained model is often lower.

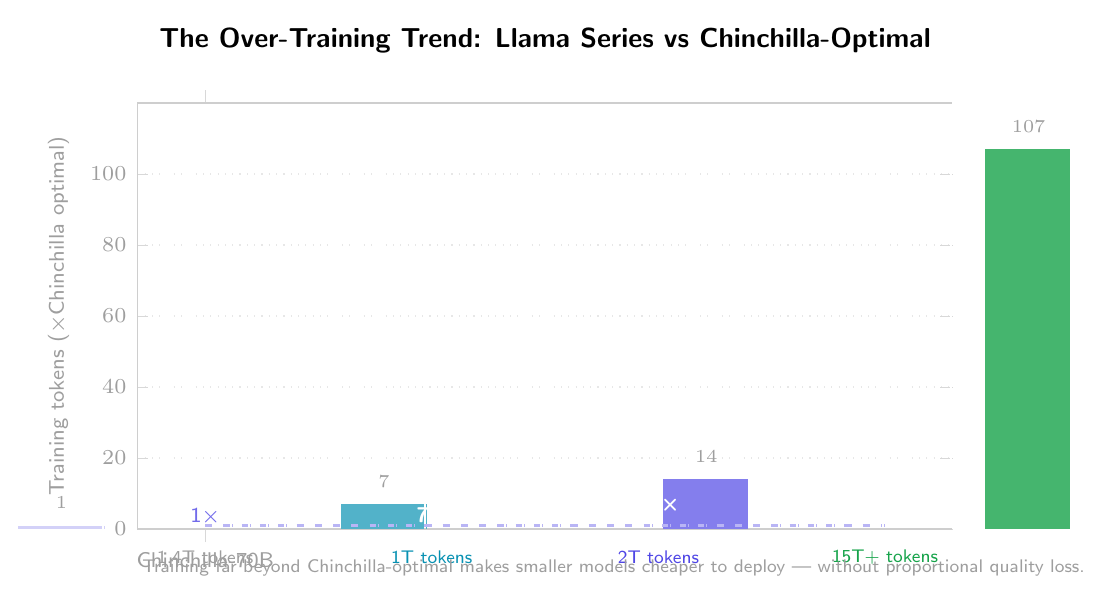

Meta’s Llama series exemplifies this:

- Llama 1 (7B): Trained on 1T tokens (~7× Chinchilla-optimal)

- Llama 2 (7B): Trained on 2T tokens (~14× Chinchilla-optimal)

- Llama 3 (8B): Trained on 15T+ tokens (~100× Chinchilla-optimal)
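The over-training multiples in the list above are each model's token count divided by the 20-tokens-per-parameter target. A short sketch that reproduces them (the helper name is hypothetical; token counts are the rounded figures from the text):

```python
CHINCHILLA_TOKENS_PER_PARAM = 20.0


def overtraining_ratio(n_params: float, n_tokens: float) -> float:
    """How many times more tokens than the 20-per-parameter rule of thumb."""
    return n_tokens / (CHINCHILLA_TOKENS_PER_PARAM * n_params)


models = {
    "Llama 1 (7B)": (7e9, 1e12),
    "Llama 2 (7B)": (7e9, 2e12),
    "Llama 3 (8B)": (8e9, 15e12),
}
for name, (n_params, n_tokens) in models.items():
    print(f"{name}: {overtraining_ratio(n_params, n_tokens):.1f}x Chinchilla-optimal")
```

This gives roughly 7×, 14×, and 94×, matching the rounded figures in the list.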

Sardana and Frankle (2024) formalized this in “Beyond Chinchilla-Optimal”: the more queries you anticipate, the smaller the model should be and the more it should be over-trained.
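A rough way to see the effect is to add an inference term to the training cost. The sketch below assumes about 6·N FLOPs per training token and about 2·N FLOPs per inference token, and it compares two hypothetical configurations; it says nothing about whether they reach the same quality, which a real analysis would have to establish first.

```python
def lifetime_flops(n_params: float, train_tokens: float, inference_tokens: float) -> float:
    """Training (~6*N*D_train) plus inference (~2*N*D_inference) FLOPs."""
    return 6.0 * n_params * train_tokens + 2.0 * n_params * inference_tokens


# Hypothetical pair: a heavily over-trained 7B model vs. a Chinchilla-style 70B model.
small = dict(n_params=7e9, train_tokens=15e12)
large = dict(n_params=70e9, train_tokens=1.4e12)

for served_tokens in (0.0, 1e11, 1e12):
    cost_small = lifetime_flops(inference_tokens=served_tokens, **small)
    cost_large = lifetime_flops(inference_tokens=served_tokens, **large)
    cheaper = "small" if cost_small < cost_large else "large"
    print(f"{served_tokens:.0e} inference tokens -> cheaper overall: {cheaper}")
```

With no inference traffic the larger model is cheaper to produce, but once enough tokens are served the smaller model's lower per-token cost outweighs its extra training compute.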

Modified Rule: Build the smallest model that satisfies your quality goals, using as much data as needed. This is now the de facto industry standard.

Correction 2: Data Quality and the Data Wall

Scaling laws assume an effectively unlimited supply of training data. Reality is less accommodating.

Quality varies greatly among web-scraped texts, and high-quality data (well-written, factual, diverse, non-repetitive) is finite. The total amount of good-quality English text on the Internet is estimated at 3–10 trillion tokens depending on quality standards. For frontier models training on 15T+ tokens, this is a significant limiting factor.

Data quality shifts the scaling curve: at the same compute, training on curated data reaches lower loss than training on uncurated web crawl. Microsoft’s Phi series demonstrated this dramatically: Phi-1 and Phi-2 achieved surprisingly strong performance with small models by using “textbook-quality” data, suggesting that data quality can partially substitute for data volume.

Correction 3: Architecture-Conditioned Scaling

Standard scaling laws treat the model as a black box — N parameters, regardless of how they’re allocated. But how you allocate parameters matters.

Two 7B models with different depth/width ratios will achieve different loss values for the same compute budget. Recent studies show that optimal width grows approximately 2.8× faster than optimal depth — as models increase in size, they should be made proportionally wider, not deeper. Chinchilla-type laws that ignore architecture leave performance on the table.
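To make "same N, different shape" concrete, the sketch below uses the common approximation N ≈ 12 · n_layers · d_model² for non-embedding parameters. This assumes a standard transformer block with a feed-forward width of 4 · d_model; the two shapes are hypothetical examples, not configurations from any cited study.

```python
def approx_params(n_layers: int, d_model: int) -> int:
    """Rough non-embedding parameter count: 12 * n_layers * d_model^2."""
    return 12 * n_layers * d_model ** 2


deep_narrow = approx_params(n_layers=128, d_model=2048)
shallow_wide = approx_params(n_layers=32, d_model=4096)

print(f"deep/narrow:  {deep_narrow / 1e9:.2f}B")   # deep/narrow:  6.44B
print(f"shallow/wide: {shallow_wide / 1e9:.2f}B")  # shallow/wide: 6.44B
```

Both shapes land on the same parameter count, so a law that only sees N cannot distinguish them even though their training behavior may differ.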

MoE architectures add a further dimension. Clark et al. (2022) showed that MoE models have fundamentally different optimal compute allocation than dense models — expert count, expert size, and routing granularity all interact with the traditional N/D trade-off.

The take-away: we are transitioning from “How Big?” to “How Big, in What Shape?” — and this remains an immature area.

The New Frontier: Scaling Thinking, Not Just Training

The most important paradigm shift of 2024–2026 is this: instead of scaling training compute, scale inference compute — how long the model “thinks” before answering.

Traditional scaling is straightforward: more training FLOPs produce a better model. Once deployed, quality is fixed regardless of question difficulty.

Models like OpenAI’s o1/o3 and DeepSeek-R1 are trained (via reinforcement learning on reasoning tasks) to use extra inference compute:

- Chain-of-thought reasoning: intermediate reasoning steps are generated before the final answer

- More generated tokens mean more “thinking time,” which tends to raise accuracy on difficult problems

- Dynamic compute allocation: the model can spend more time on hard questions and less on easy ones

The scaling behavior is remarkable:

Accuracy on difficult problems (math, code, science) scales log-linearly with inference compute. Doubling “thinking time” produces a roughly constant accuracy increase.
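Log-linear scaling means accuracy grows with the logarithm of inference compute, so each doubling adds the same increment. A toy model of that shape, with made-up coefficients (the baseline accuracy of 0.40 and gain of 0.05 per doubling are assumptions, not measurements):

```python
import math


def accuracy_at(compute_multiplier: float,
                base_accuracy: float = 0.40,
                gain_per_doubling: float = 0.05) -> float:
    """Log-linear model: each doubling of inference compute adds a fixed gain."""
    raw = base_accuracy + gain_per_doubling * math.log2(compute_multiplier)
    return max(0.0, min(1.0, raw))  # accuracy cannot leave [0, 1]


for multiplier in (1, 2, 4, 8, 16):
    print(f"{multiplier:>2}x thinking budget -> accuracy {accuracy_at(multiplier):.2f}")
```

The clamp is a reminder that the trend cannot continue indefinitely: a log-linear fit only describes the range over which it was observed.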

A smaller model that thinks longer can match or beat a larger model that answers immediately — provided the problem benefits from extra reasoning. A 7B parameter reasoning model can outperform a 70B non-reasoning model on mathematical reasoning, if the task is difficult enough.

This creates a new trade-off: model size vs. thinking budget.
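The trade-off can be put in rough numbers with the approximation of about 2·N FLOPs per generated token. This sketch ignores prompt processing and the growth of attention cost with context length, and the token counts are hypothetical:

```python
def query_flops(n_params: float, generated_tokens: int) -> float:
    """Approximate decoding cost: ~2 * N FLOPs per generated token."""
    return 2.0 * n_params * generated_tokens


small_thinker = query_flops(7e9, 5_000)  # 7B model, long reasoning trace
large_direct = query_flops(70e9, 500)    # 70B model, short direct answer

print(f"7B x 5000 tokens: {small_thinker:.1e} FLOPs")  # 7.0e+13 FLOPs
print(f"70B x 500 tokens: {large_direct:.1e} FLOPs")   # 7.0e+13 FLOPs
```

Under these assumptions a 10× smaller model can spend 10× more tokens on a query for the same compute, which is the budget inside which the "think longer" strategy has to pay off.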

Caveat: Inference-time scaling is most effective on problems with verifiable answers — math, code, formal logic. On open-ended generation or creative writing, the benefits are less clear. It is difficult to “think harder” about a poem.

Practical Lessons for Resource-Limited Researchers

Scaling laws do not say “you cannot compete with small models.” They say “here is exactly how to get the most out of every FLOP you have.”

1. Over-train aggressively. Don’t stop a 1B parameter model at 20B tokens. Continue to 200–500B tokens. The marginal cost of additional tokens is low compared to the marginal quality gain.

2. Data quality is your biggest lever. At small model sizes, data quality matters more than at large scale. A large model can partially compensate for low-quality data through sheer capacity; a small model cannot. Invest in a high-quality, curated pipeline before investing in more compute.

3. Task-specific scaling behaviors differ. Scaling laws for next-token prediction don’t necessarily apply to classification, retrieval, or structured prediction. Small encoders (BERT-class) often remain competitive for these tasks. Don’t assume a 7B decoder is always better than a 350M encoder for your downstream task.

4. Architectural choices have amplified effects at small scale. At 70B parameters, poor architecture is partially masked by sheer scale. At 1–7B parameters, architecture becomes critical. Width-depth optimization and efficient attention yield their greatest returns precisely where compute is most constrained.

5. Inference-time scaling is your asymmetric advantage. A capable small reasoning model with a generous thinking budget can outperform a 10× larger non-reasoning model on hard problems. Researchers constrained by compute can compete on hard-task performance without frontier-scale training.

What We Still Don’t Know

Is scaling continuing at the frontier? Apparent plateaus on MMLU and GSM8K likely reflect benchmark saturation rather than fundamental limits — newer, harder benchmarks (FrontierMath, ARC-AGI, GPQA) show continuous scaling behavior.

Will synthetic data extend or break scaling? Code and math-based tasks appear more amenable to synthetic data augmentation than general natural language. Model collapse from naive training on self-generated data remains a real risk; whether careful synthetic data generation extends the data wall is an open question.

Is Sutton’s “Bitter Lesson” still valid? Sutton argued that general methods that leverage computation ultimately outperform methods built on hand-engineered human knowledge. Recent results complicate a naive “just add scale” reading: innovations in MoE, state-space models, data curation, and inference-time scaling are providing advantages beyond pure scale. The emerging consensus: scale is necessary but no longer sufficient, and you must scale intelligently.

Conclusion

The history of scaling laws is a process of continually refined understanding. Kaplan identified the power-law relationships and suggested aggressive parameter scaling. Chinchilla showed that data should increase equally with parameters. Since Chinchilla, the field has moved through over-training economics, data quality as a first-class variable, architecture-conditioned scaling, and — most recently — inference-time scaling as an entirely new performance dimension.

The overall lesson: scaling laws are themselves evolving. The researchers who know the current state of these laws — not just the Chinchilla headlines — have a competitive advantage regardless of budget. The single most important shift is from one-dimensional scaling (“make it bigger”) to multi-dimensional scaling (“make it the right size, the right shape, trained on the right data, with the right amount of thinking time”). This makes the optimization problem harder, but creates far more opportunities for smart, resource-limited researchers to find an advantage.

Recommended Reading

- Kaplan et al. (2020). “Scaling Laws for Neural Language Models.” arXiv:2001.08361

- Hoffmann et al. (2022). “Training Compute-Optimal Large Language Models.” arXiv:2203.15556

- Sardana & Frankle (2024). “Beyond Chinchilla-Optimal: Accounting for Inference in Language Model Scaling Laws.” arXiv:2401.00448

- Clark et al. (2022). “Unified Scaling Laws for Routed Language Models.” arXiv:2202.01169

- Muennighoff et al. (2023). “Scaling Data-Constrained Language Models.” arXiv:2305.16264

- Gunasekar et al. (2023). “Textbooks Are All You Need.” arXiv:2306.11644

- Snell et al. (2024). “Scaling LLM Test-Time Compute Optimally Can Be More Effective Than Scaling Model Parameters.” arXiv:2408.03314